The Silicon Valley startup has just raised an astonishing $640M in additional funding and is now valued at $2.8B. Has it captured AI lightning in a bottle?

Groq has announced a $640M Series D round at a valuation of $2.8B, led by BlackRock Private Equity Partners. Founded by one of the developers of the original Google TPU, Jonathan Ross, Groq has shifted towards extremely fast and large-scale inference processing based on the Language Processing Unit which Ross pioneered. The additional investment will enable Groq to accelerate the next two generations of LPUs.

“You can’t power AI without inference compute,” said Jonathan Ross, CEO and Founder of Groq. “We intend to make the resources available so that anyone can create cutting-edge AI products, not just the largest tech companies. This funding will enable us to deploy more than 100,000 additional LPUs into GroqCloud.”

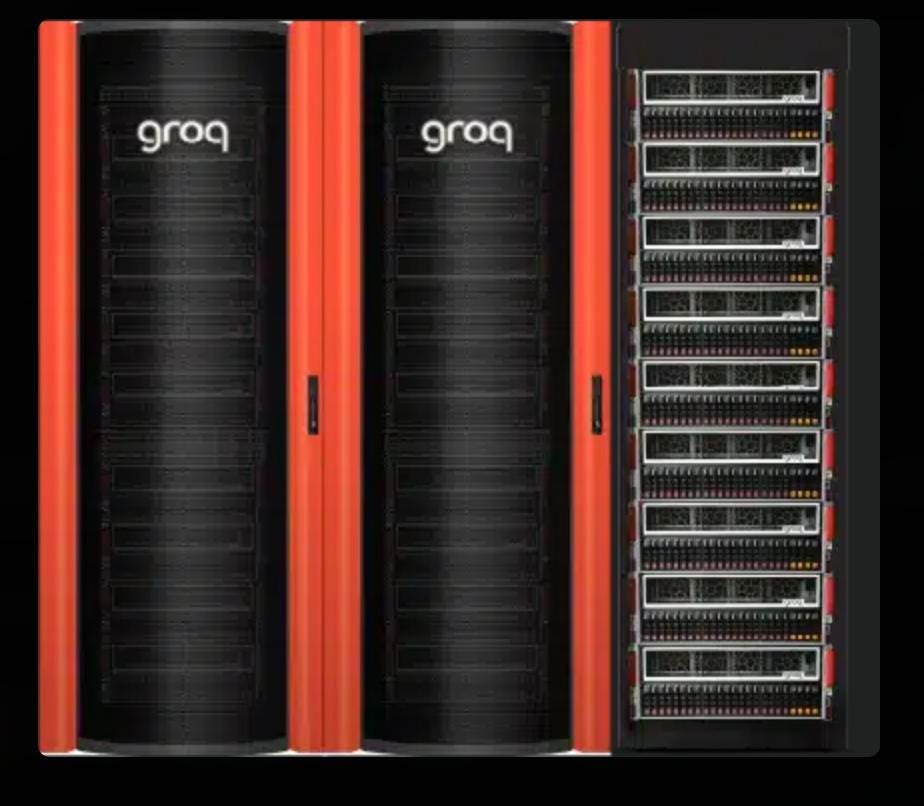

What Does Groq Offer?

The Groq LPU is a single-core processor designed for LLMs, interconnected with a fast switchless routing fabric using 288 QSFP28 optical cables. A rack is built from nine GroqNode 1 servers (one server acts as a redundant resource) with a fully connected internal RealScale network, delivering accelerated compute performance of up to 48 PetaOPs (INT8) or 12 PFLOPs (FP16). Groq has championed an open-source approach, fully embracing models such as Meta’s Llama 3.1. By offering access to LPUs on GroqCloud, where developers can see the performance for themselves, the company has built a loyal following, with over 70,000 developers using GroqCloud to create applications.

What’s Groq’s claim to fame? Extremely fast inference. How do they do it? SRAM.

Unlike AI GPUs from Nvidia and AMD, Groq uses on-chip SRAM, with 14GB of high-bandwidth shared memory for weights across the rack. SRAM is some 100x faster than the HBM used by GPUs. While 14GB of SRAM in a rack is dwarfed by the HBM in a rack of GPUs, the Groq LPU’s SRAM is particularly effective in inference tasks where speed and efficiency are paramount. For appropriately sized models, the faster SRAM and optical switchless fabric can generate some 500-750 tokens per second. That’s amazingly fast, and you just have to go to their website to see it live (it’s free!). To put things into perspective, ChatGPT with GPT-3.5 can only generate around 40 tokens per second. Now you can see why they call their cloud access “Tokens as a service”.
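To make the gap concrete, here is a minimal back-of-the-envelope sketch using only the throughput figures quoted above. The 1,000-token response length is a hypothetical value chosen for illustration, and the calculation assumes a steady streaming rate and ignores time to first token.

```python
# Throughput figures quoted in the article (tokens per second).
GROQ_TOKENS_PER_S = (500, 750)  # quoted range for appropriately sized models
CHATGPT_TOKENS_PER_S = 40       # quoted figure for ChatGPT with GPT-3.5

# Hypothetical response length, not from the article.
RESPONSE_TOKENS = 1000


def generation_seconds(num_tokens: int, tokens_per_s: float) -> float:
    """Time to stream num_tokens at a steady rate (ignores time to first token)."""
    return num_tokens / tokens_per_s


def speedup(fast_rate: float, slow_rate: float) -> float:
    """How many times faster fast_rate is than slow_rate."""
    return fast_rate / slow_rate


if __name__ == "__main__":
    baseline = generation_seconds(RESPONSE_TOKENS, CHATGPT_TOKENS_PER_S)
    print(f"{CHATGPT_TOKENS_PER_S} tok/s: {baseline:.2f} s")
    for rate in GROQ_TOKENS_PER_S:
        seconds = generation_seconds(RESPONSE_TOKENS, rate)
        ratio = speedup(rate, CHATGPT_TOKENS_PER_S)
        print(f"{rate} tok/s: {seconds:.2f} s ({ratio:.2f}x faster)")
```

Under these assumptions, the quoted range works out to roughly 12x to 19x the quoted ChatGPT rate.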

Unfortunately, there is no free lunch. SRAM is far more expensive than DRAM or even HBM, contributing to the high cost of Groq LPU cards, which are priced at $20,000 each. More importantly, the LPU’s SRAM capacity is about three orders of magnitude smaller than a GPU’s HBM3e. So, this platform can’t do training, and can only hold an LLM if it is relatively small.
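The capacity constraint can be sketched with simple arithmetic. The estimate below is a rough illustration, not Groq's sizing method: it counts weights only (no KV cache or activations), assumes all 14GB of a rack's SRAM is available for weights, and uses decimal gigabytes. The function names are hypothetical.

```python
import math

RACK_SRAM_GB = 14  # SRAM per rack, as quoted in the article

# Bytes needed to store one parameter at each precision.
BYTES_PER_PARAM = {"int8": 1, "fp16": 2}


def weights_gb(params_billions: float, precision: str) -> float:
    """Weights-only footprint in decimal GB.

    One billion parameters at one byte each is one GB, so the result is
    simply billions of parameters times bytes per parameter.
    """
    return params_billions * BYTES_PER_PARAM[precision]


def racks_needed(params_billions: float, precision: str,
                 rack_gb: float = RACK_SRAM_GB) -> int:
    """Minimum racks whose combined SRAM can hold the weights (assumption:
    weights can be split evenly across racks with no overhead)."""
    return math.ceil(weights_gb(params_billions, precision) / rack_gb)


if __name__ == "__main__":
    for size in (8, 70):
        for precision in ("int8", "fp16"):
            print(f"{size}B @ {precision}: {weights_gb(size, precision):.0f} GB, "
                  f"{racks_needed(size, precision)} rack(s)")
```

By this estimate, a 70B-parameter model would need its weights spread across several racks even at INT8, which is why model size matters so much on this platform.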

But smaller models are in fashion of late. And if your model is, say, 70B parameters or less, you would do well to check it out on Groq. Can you read 500 words (tokens) per second? No. But inference processing is becoming interconnected, with the output of one query being used as the input to the next. In this world, Groq brings a lot to the table.
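A quick sketch shows why chained queries change the picture. It assumes each step must finish generating before the next one starts, and the step count and tokens per step are hypothetical values chosen for illustration. Only the two throughput rates come from the figures quoted earlier.

```python
def chain_seconds(steps: int, tokens_per_step: int, tokens_per_s: float) -> float:
    """Total generation time for a sequential chain of queries.

    Each step's output feeds the next step, so the steps cannot overlap
    and their generation times add up.
    """
    return steps * tokens_per_step / tokens_per_s


if __name__ == "__main__":
    STEPS = 10             # hypothetical chain length
    TOKENS_PER_STEP = 300  # hypothetical output size per step
    for label, rate in (("40 tok/s", 40), ("500 tok/s", 500)):
        total = chain_seconds(STEPS, TOKENS_PER_STEP, rate)
        print(f"{label}: {total:.1f} s for the whole chain")
```

With these illustrative numbers, the chain takes over a minute at 40 tokens per second and a few seconds at 500, even though no human reads any of the intermediate output.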

Conclusions

If you want to compete against Nvidia, you had better have something very different from just another GPU. Cerebras has a completely different approach in training. Groq has amazingly low latency in inference, albeit for smaller models. With the new funding in place, Groq can move to 4nm manufacturing to support larger models, probably in the next year. Note that Groq also doesn’t have the same supply issues Nvidia faces; they use GlobalFoundries, not TSMC.

LLM inference processing is starting to take off. Most recently, Nvidia said that 40% of its data center GPU revenue last quarter came from inference processing.

Disclosures: This article expresses the author’s opinions and should not be taken as advice to purchase from or invest in the companies mentioned. Cambrian-AI Research is fortunate to have many, if not most, semiconductor firms as our clients, including Blaize, BrainChip, Cadence Design, Cerebras, D-Matrix, Eliyan, Esperanto, GML, Groq, IBM, Intel, NVIDIA, Qualcomm Technologies, Si-Five, SiMa.ai, Synopsys, Ventana Microsystems, Tenstorrent and scores of investment clients. Of the companies mentioned in this article, Cambrian-AI has had or currently has paid business relationships with Groq, Cerebras, and Nvidia. For more information, please visit our website at https://cambrian-AI.com.
